Earlier chapters connected knowledge-graph construction, core neuro-symbolic algorithms, and distributed cloud–edge compute. Deploying models on servers is not the same as operating a traffic governance system. A common engineering line: models end the feature pipeline but begin the system.

This closing chapter asks how to organize prior technical pieces into a usable platform: modular design, unified flows, and multi-role interfaces—from stacking isolated algorithms to a governance closed loop aligned with real operations.

21.1 Integrating Knowledge Substrate, Reasoning Models, Certification, and Deployment#

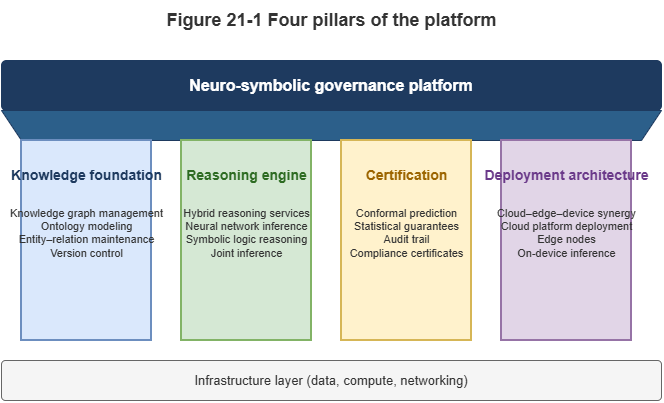

A complete neuro-symbolic trustworthy governance platform rests on four pillars:

Knowledge substrate: SkyKG as shared memory and statute store—static urban grids plus live dynamic temporal graphs of aircraft and airspace.

Reasoning models (cognitive engines): Fast GNNs and slow LLMs mounted on the substrate, extracting situational features from sensors and performing spatiotemporal conflict prediction.

Certification (trust guardrails): Conformal prediction and statistical monitoring wrap models—predictions pass calibration and violation checks before reaching actuators.

Deployment architecture (physical plumbing): Cloud–edge middleware (e.g., high-concurrency graph engines from Chapter 20) meets hard latency constraints across city 5G sites and core datacenters.

Integration is not a linear pipeline glue-up but system-level decoupling and recomposition—the full “trustworthy, controllable, explainable” pattern advocated in this book.

21.2 Platform-Oriented Modular Design#

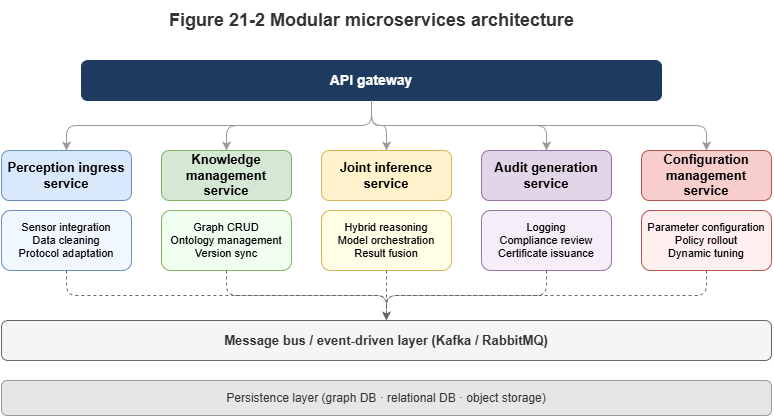

Fast-evolving aircraft hardware and algorithms favor microservice-style modularity:

Perception and fusion ingress: Heterogeneous ADS-B, radar, telco feeds—cleaning, entity alignment, translation to temporal triples the graph engine consumes.

Rules and knowledge management: Visual KG editing for planners—airspace ceilings, priority policies—compiled to Datalog or SPARQL constraints downstream.

Neuro-symbolic joint inference: Algorithm plugin APIs so developers can swap an older GCN for a newer spatiotemporal Transformer without rewriting business rules.

Compliance certification and audit generation: Package every inference outcome with evidence chains, model versions, and conformal intervals in tamper-evident semantic logs.

21.3 Unifying Data Flow, Control Flow, and Evidence Flow#

Classic digital traffic stacks track data flow (bottom-up sensing) and control flow (top-down intervention). Neuro-symbolic trustworthy platforms add a third:

Data flow: What the world is doing—high-rate trajectories, weather fields—continuously refresh the ABox.

Control flow: What the system wants the world to do—route changes, hover commands, airspace releases—down to autopilots.

Evidence flow: Why this control was issued—parallel to every control action, record triggering rule identifiers, neural attributions, and confidence labels.

Data drives models; models emit controls; evidence flow binds causality for ledgers or regulatory databases—decision transparency end to end.

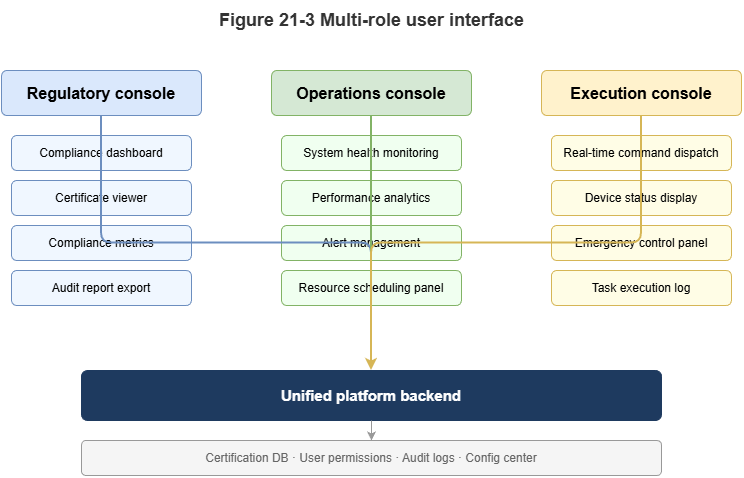

21.4 Multi-Role Interfaces: Regulators, Operators, Executors#

A viable platform speaks different languages to its ecosystem:

Regulators / ANSPs: Macro digital twin views and audit probes—risk heatmaps, capacity saturation—not raw tensors. Natural-language drill-down (LLM-assisted) into deviation compliance chains for spot checks.

Operators (logistics, inspection fleets): Commercial scheduling APIs—local optimization for routing, cost, and capacity inside platform hard rules and safety floors.

Executors (autopilots): Ultra-low-latency machine commands—e.g., MAVLink-style encrypted, authenticated waypoints and avoidance vectors.

21.5 From Research System to Industry Platform#

Crossing the valley of death to an industry platform stresses:

High availability and disaster tolerance: Redundancy and Byzantine-tolerant patterns—when an edge rack fails, neighbors seamlessly adopt its subgraph workloads toward four-nines-class uptime targets.

Standardization and airworthiness alignment: Interfaces toward UTM concepts of operations; symbolic engines mapped to DO-178C-style software assurance for commercial scale-out.

Human-in-the-loop: Even with automatic deconfliction, extreme concept drift (e.g., unprecedented severe weather) should smoothly escalate annotated risk bundles to human final authority.

21.6 Open Ecosystem and Reproducible Experiments#

AI progress depends on open tooling. This design favors open frameworks, proprietary industry data:

Benchmarks: City-sim low-altitude neuro-symbolic datasets—complex weather, dense multi-agent squeeze—for stronger GNNs or rule induction.

Open toolchain: SkyKG base ontology schemas and scripts from sensors to temporal graphs.

Appendix C labs: Readers can start from a 10-UAV rule engine, add LLM explanations and conformal prediction, and miniaturize a cloud–edge governance loop.

From scattered perceptual neurons to explicit symbolic law; from cloud strategy to edge milliseconds; from code piles to evidence-backed safety—this completes a trustworthy neuro-symbolic governance stack for urban low-altitude traffic: a key to today’s deployment bottlenecks and a path toward higher autonomy tomorrow.

Chapter Summary#

This chapter covered platformization: integrating substrate, models, certification, and deployment as one stack; microservice modularity for ingress, knowledge, joint inference, and audit; data + control + evidence unification; multi-role interfaces; industrial HA, standards, and human-in-the-loop; and open benchmarks/tooling with a path from Appendix C exercises to a miniature closed loop.

Key Concepts#

Platformization: Turning knowledge, reasoning, certification, and deployment into a reusable operational system.

Modular design: Service boundaries that allow independent evolution without fragile coupling.

Three-flow unification: Joint governance of data, control, and evidence.

Multi-role interfaces: Different abstractions for regulators, operators, and aircraft.

Governance closed loop: Sense → assess risk → act → retain evidence continuously.

Exercises#

Why does “deploying several models” fall short of a governance platform?

Why should evidence flow be co-equal with data and control flows in platform design?

Without HA and human-in-the-loop, where does a research prototype most often stall on the path to an industry platform?

Case Study#

End-to-end municipal low-altitude mission: application → rule review → dynamic graph alert → control issuance → audit retention—showing substrate, engine, and certification as one business chain.

Figure Suggestions#

Figure 21-1: Four-pillar integration architecture.

Figure 21-2: Modular services—ingress, knowledge, joint inference, audit.

Figure 21-3: Multi-role interfaces—regulator, operator, executor.

Formula Index#

Platform architecture focus; no core mathematical derivations.

Index: knowledge substrate, reasoning models, certification, deployment, data/control/evidence flows.

References#

RTCA (2011). DO-178C: Software Considerations in Airborne Systems and Equipment Certification.

Newman, S. (2015). Building Microservices: Designing Fine-Grained Systems. O’Reilly Media.

Kopardekar, P., Rios, J., Prevot, T., Johnson, M., Jung, J., & Robinson, J. E. (2016). Unmanned Aircraft System Traffic Management (UTM) Concept of Operations. AIAA Aviation Technology, Integration, and Operations Conference.

Shi, W., Cao, J., Zhang, Q., Li, Y., & Xu, L. (2016). Edge Computing: Vision and Challenges. IEEE Internet of Things Journal, 3(5), 637–646.

Vogels, W. (2009). Eventually Consistent. Communications of the ACM, 52(1), 40–44.